That would displace time for achieving the tester’s presumable mission: finding deep problems in the product she’s testing. Based on my out-of-the-box experience, I would anticipate that any tester working with this tool would spend enormous amounts of time and effort working around its problems and limitations. It’s possible that everything smooths out after a while, but I have no reason to believe that. Are the people developing Katalon Studio using the product to help with the testing of itself? Neither a Yes nor a No answer bodes well. This is particularly odd, since one would expect a testing tools company, of all things, to produce a well-tested, stable, and polished product. In my opinion, Katalon Studio has not been competently, thoroughly, and broadly tested or if it has, its product management has either ignored or decided not to address the problems that I am reporting here. However, I’m not being compensated for this, and I’ve encountered a blizzard of bugs in a very short time. Were I motivated, I could invest a few more hours, develop a coverage outline, and perform deeper testing on the product.

That’s an important thing for testers to note: investigating and reporting bugs, and preparing test reports is important, but presents opportunity cost against interacting with the product to obtain deeper test coverage. Writing all this up takes considerably more time. The actual testing here took no more than an hour, and most of that time was consumed by sensemaking, and reproducing and recording bugs. This kind of shallow testing could be okay, as far as it goes, if it were inexpensive and non-disruptive, and if the tool were stable, easy to use, and reliable-which it seems not to be. The motivating idea seems to be recording and playing back actions, checking for the presence of on-screen elements, and completing simple processes.

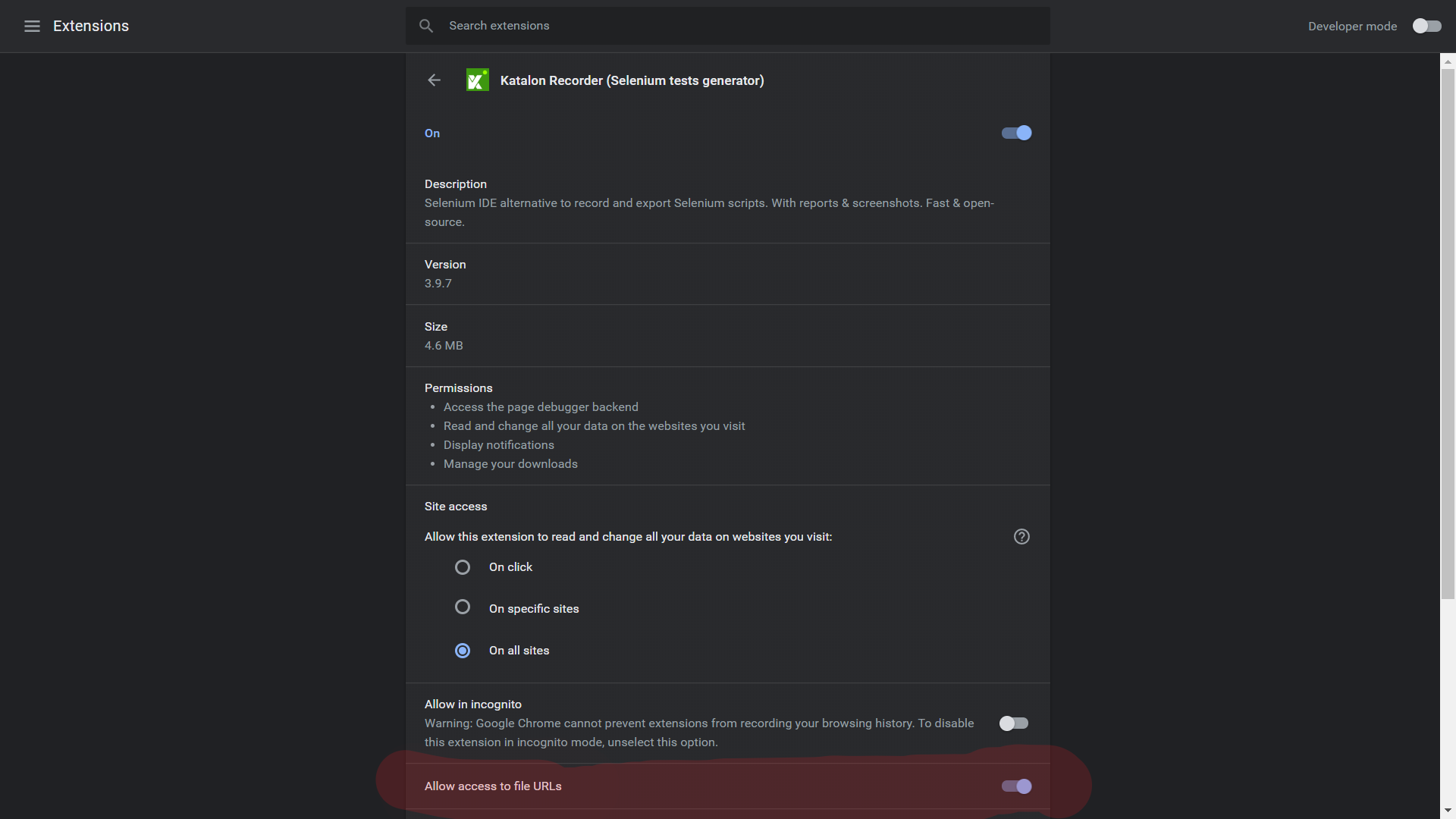

I did record a brief video that appears below.īoth the product’s design and documentation steer the user-a tester, presumably-towards very confirmatory and shallow testing. As with mabl, I was encountered enough problems on first encounter with the product that it swamped my ability to stay focused and keep track of them all. I stumbled over several problems that are not included in this report, and I perceive many systemic risks to be investigated. In each case, Katalon’s recording functions failed to record my behaviours reliably. I pointed Katalon Record to three different Web applications. My first impression is that the tool is unstable, brittle and prone to systematic errors and omissions.Ī very short encounter with the product reveals startlingly obvious problems, including hangs and data loss. The idea behind sympathetic testing is not to find bugs, but to exercise a product’s features in a relatively non-challenging way. It’s brief, shallow testing to determine whether if the product is fit for deeper testing, or whether it has immediately obvious or dramatic problems.

#Open katalon studio in chrome katalon verification#

Sanity testing might also be called “smoke testing”, “quick testing”, or “build verification testing”. It provides a foundation for effective, efficient, deliberative, deep testing later on. Survey testing tends to be spontaneous, open, playful, and relatively shallow. The general mission of survey testing is learning about the design, purposes, testability, and possibilities of the product. My self-assigned charter was to explore and survey Katalon Studio, focusing on claims and identifying features in the product through sympathetic use.Īs before, I will include some meta-notes about the testing in indented text like this. This time, the application is Katalon Studio. Last time, the application under test was mabl. The work was performed in September 2021, with follow-up work November 3-4, 2021. This is an experience report of attempting to perform a session of sympathetic survey and sanity testing on a “test automation” tool. But hey, it’s worth it, right? Introduction